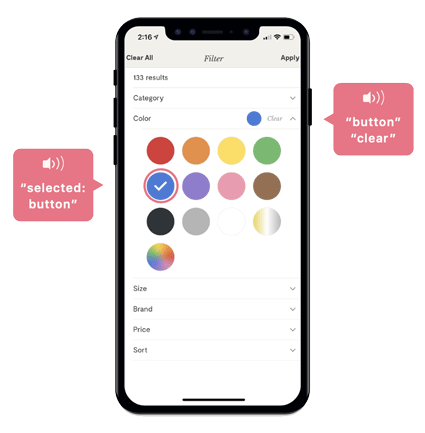

Supporting accessibility within software was new for me, but the experience has highlighted the importance of inclusivity within the products I build.

As part of this project, I researched how blind and visually impaired users engage with screen reader software to navigate the web. I came across inspirational stories and learned about what technology meant in the life of a blind user, and specifically how using a mobile device brought new opportunities to simultaneously connect and experience independence.

One of those stories was from Joy Ross, a blind YouTuber who lost her sight in her 20s. On her channel, Joy shares her experiences using tech, and in the included video clip she describes what it meant to use her iPhone for the first time. Reflecting on the moment she sent her first text message, she remembers crying, and goes on to explain how her phone has helped her feel like a normal part of society and the world.

In her words, her phone has become her eyes, transforming her life and giving her back the freedom she once lost, all because she can participate in the things others are doing.

Earlier in the video, Joy talks about her experience on a popular social media site. At one point, a feature that let her see engagement from her followers changed, apparently leaving her screen reader unable to read "likes" and Joy unable to get feedback from her followers, an obvious source of connection for her.

"My iPhone has been my eyes for me, it has changed my life; I can be a normal person and function in society"